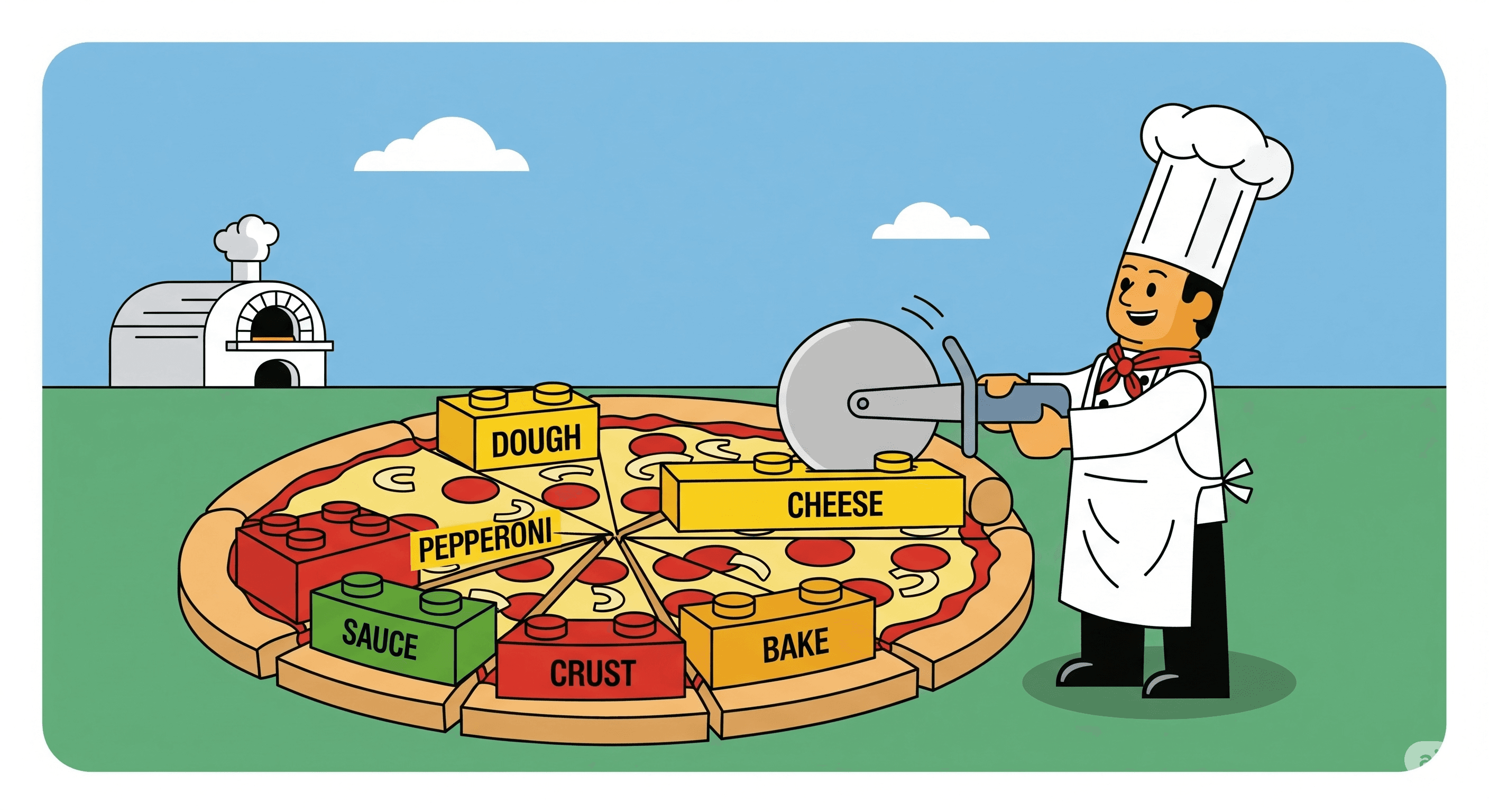

Tokenization: Breaking Text into Tiny LEGO Bricks of Meaning

Introduction

If you’ve ever tried to explain a song to someone by humming just parts of it, you already get the idea of tokenization. It’s the process computers use to break down big chunks of text into smaller, manageable pieces, called tokens, so...
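The excerpt stops before naming a specific method, so the following is only an illustrative sketch. It assumes a simple rule-based split on words and punctuation. `simple_tokenize` is a hypothetical helper, not the author's code. Many modern language models instead use subword tokenizers such as byte-pair encoding, which can split one word into several tokens.

```python
import re


def simple_tokenize(text: str) -> list[str]:
    """Split text into word and punctuation tokens (hypothetical helper).

    Illustrative rule only: each run of word characters becomes one token,
    each punctuation mark becomes its own token, and whitespace is dropped.
    """
    return re.findall(r"\w+|[^\w\s]", text)


if __name__ == "__main__":
    sentence = "Tokenization breaks text into tiny bricks!"
    # Word-level pieces: ['Tokenization', 'breaks', 'text', 'into', 'tiny', 'bricks', '!']
    print(simple_tokenize(sentence))
    # Character-level pieces, the smallest possible "bricks": ['L', 'E', 'G', 'O']
    print(list("LEGO"))
```

The two printouts show the same idea at different granularities: bigger bricks (words) give shorter sequences, while smaller bricks (characters) can represent any text but produce many more tokens.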

Aug 13, 2025 · 3 min read