Tokenization: Breaking Text into Tiny LEGO Bricks of Meaning

Introduction

If you’ve ever tried to explain a song to someone by humming just parts of it, you already get the idea of tokenization. It’s the process computers use to break down big chunks of text into smaller, manageable pieces — called tokens — so they can understand and work with them.

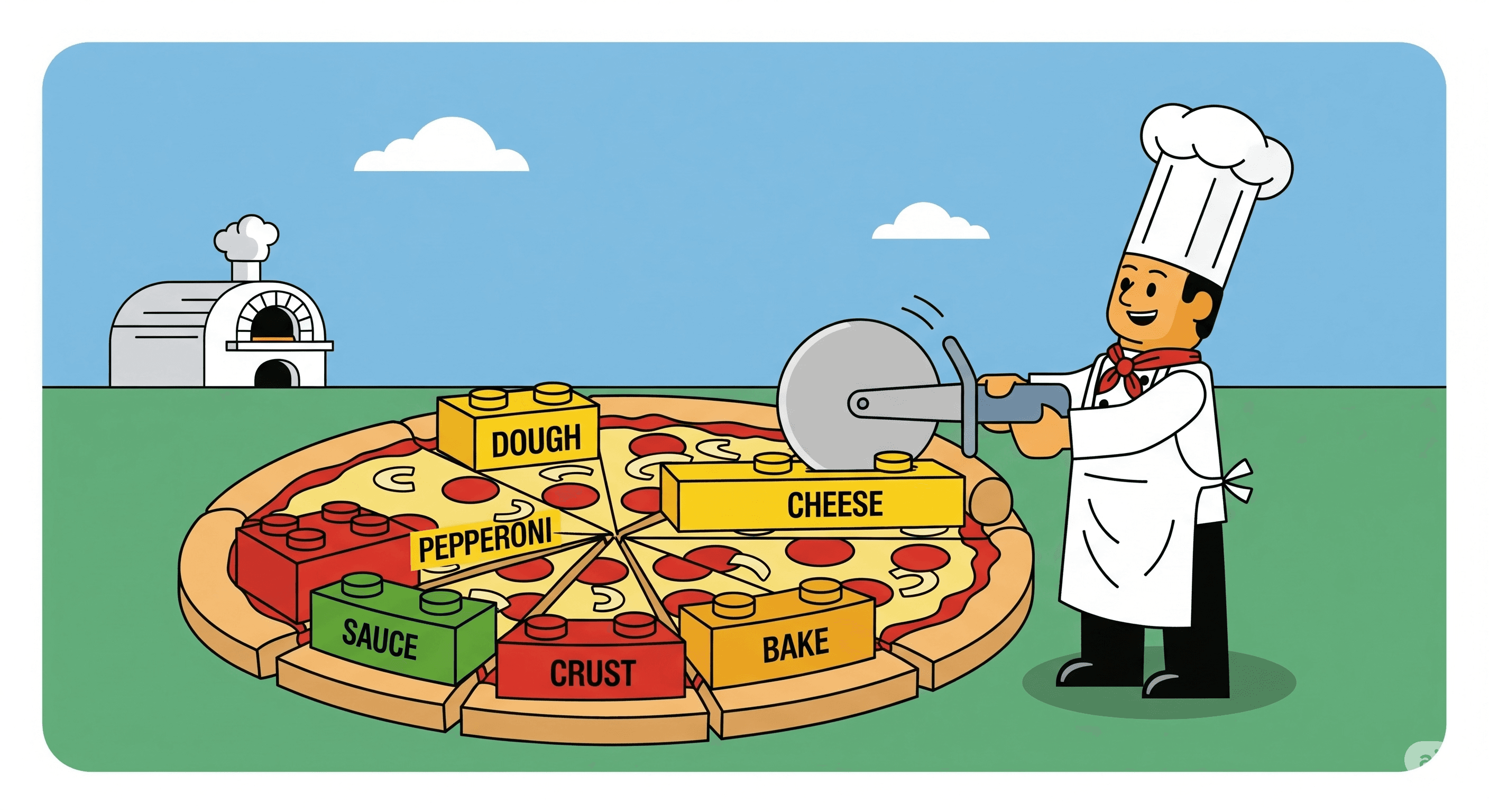

In NLP (Natural Language Processing), tokenization is like cutting a big pizza into slices. You can’t just eat the whole pizza in one bite (well, unless you’re a python 🐍)… you slice it into parts you can handle.

1. What is Tokenization?

Tokenization is the process of splitting text into smaller units — these units can be words, subwords, characters, or even symbols.

For example:

Text: "I love JavaScript."

Tokens: ["I", "love", "JavaScript", "."]

Think of it as turning a big paragraph into tiny “meaning blocks” that a machine can process.
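Splitting on whitespace is the simplest tokenizer you could try, and it shows why tokenization needs rules. In this Python sketch (Python is just a convenient choice for these examples), the period stays glued to "JavaScript", so the result differs from the token list above:

```python
# A naive "tokenizer": split on whitespace only.
text = "I love JavaScript."
naive_tokens = text.split()
print(naive_tokens)  # ['I', 'love', 'JavaScript.']

# The tokens we actually wanted keep punctuation separate.
expected = ["I", "love", "JavaScript", "."]
print(naive_tokens == expected)  # False: "JavaScript." was never split
```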

2. Why Do We Need Tokenization?

Computers don’t understand human language directly. They work with numbers. Tokenization is the first step in converting human-readable text into something the machine can handle.

It helps because:

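It makes pattern recognition easier, because the model sees the same units over and over (for example, that "love" often follows "I").

It reduces complexity: the model works with a fixed vocabulary of known pieces instead of arbitrary strings.

It speeds up processing in NLP tasks, because a sentence becomes a short sequence of tokens instead of a long stream of characters.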

Without tokenization, a model like GPT would be staring at a giant blob of characters and saying, “Uh… what?”

3. Types of Tokenization

a) Word Tokenization

Breaks text into words, usually treating punctuation marks as separate tokens.

Example:

"Python is cool." → ["Python", "is", "cool", "."]
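A minimal word tokenizer can be sketched with a single regular expression from Python's standard `re` module. The pattern is an assumption chosen for illustration, not a standard rule. It keeps runs of letters and digits together and makes every punctuation mark its own token:

```python
import re

def word_tokenize(text: str) -> list[str]:
    """Split text into word tokens and single-character punctuation tokens."""
    # \w+     : a run of letters, digits, or underscores (one word)
    # [^\w\s] : one character that is neither a word character nor whitespace
    return re.findall(r"\w+|[^\w\s]", text)

print(word_tokenize("Python is cool."))     # ['Python', 'is', 'cool', '.']
print(word_tokenize("I love JavaScript."))  # ['I', 'love', 'JavaScript', '.']
```

The rule is deliberately simple: "don't" comes out as ["don", "'", "t"]. This is one reason libraries such as NLTK and spaCy use more elaborate, language-specific rules.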

b) Subword Tokenization

Breaks uncommon words into smaller known parts.

Example:

"Unhappiness" → ["Un", "happiness"]
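Greedy longest-match-first is one simple way to apply a subword vocabulary: at each position, take the longest piece the vocabulary knows. This is similar in spirit to how WordPiece (the tokenizer used by BERT) applies its vocabulary, minus details such as its "##" continuation markers. It is not the method GPT uses. The vocabulary below is a hypothetical toy set made up for this example:

```python
def subword_tokenize(word: str, vocab: set[str]) -> list[str]:
    """Greedy longest-match-first subword tokenization (a sketch)."""
    tokens = []
    start = 0
    while start < len(word):
        # Try the longest remaining piece first, shrinking until it is in vocab.
        end = len(word)
        while end > start and word[start:end] not in vocab:
            end -= 1
        if end == start:
            # Nothing in the vocabulary matches: fall back to one character.
            end = start + 1
        tokens.append(word[start:end])
        start = end
    return tokens

# Hypothetical toy vocabulary, made up for this example.
toy_vocab = {"Un", "happy", "happiness", "ness", "kind"}

print(subword_tokenize("Unhappiness", toy_vocab))  # ['Un', 'happiness']
print(subword_tokenize("Unkindness", toy_vocab))   # ['Un', 'kind', 'ness']
```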

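Models like GPT rely on subword tokenization, using a method called byte-pair encoding (BPE), so that rare or brand-new words can still be built from known pieces. The exact split depends on each tokenizer's vocabulary.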
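Byte-pair encoding learns its vocabulary from data. It starts from single characters, then repeatedly merges the most frequent adjacent pair into a new symbol. The toy sketch below shows only that core loop, on a made-up corpus. Real GPT tokenizers add details it leaves out, such as working on bytes instead of characters and pre-splitting text before merging, so treat it as an illustration rather than GPT's implementation:

```python
from collections import Counter

def merge_pair(symbols: tuple[str, ...], pair: tuple[str, str]) -> tuple[str, ...]:
    """Replace each adjacent occurrence of `pair` with one merged symbol."""
    merged = []
    i = 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            merged.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            merged.append(symbols[i])
            i += 1
    return tuple(merged)

def learn_bpe(words: list[str], num_merges: int) -> list[tuple[str, str]]:
    """Learn up to `num_merges` merge rules from a list of words."""
    # Each distinct word starts as a tuple of single characters, with its count.
    corpus = Counter(tuple(word) for word in words)
    merges = []
    for _ in range(num_merges):
        # Count adjacent symbol pairs, weighted by how often each word occurs.
        pair_counts = Counter()
        for symbols, freq in corpus.items():
            for pair in zip(symbols, symbols[1:]):
                pair_counts[pair] += freq
        if not pair_counts:
            break  # every word is already a single symbol
        # The most frequent pair wins; ties go to the pair seen first.
        best = max(pair_counts, key=pair_counts.get)
        merges.append(best)
        # Rewrite every word using the new merged symbol.
        new_corpus = Counter()
        for symbols, freq in corpus.items():
            new_corpus[merge_pair(symbols, best)] += freq
        corpus = new_corpus
    return merges

def apply_bpe(word: str, merges: list[tuple[str, str]]) -> list[str]:
    """Tokenize a word by applying the learned merges in order."""
    symbols = tuple(word)
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return list(symbols)

# Hypothetical toy corpus, made up for this example.
words = ["low"] * 3 + ["lowest"] * 2 + ["newest"] * 2
merges = learn_bpe(words, num_merges=4)
print(merges)                        # [('l', 'o'), ('lo', 'w'), ('e', 's'), ('es', 't')]
print(apply_bpe("slowest", merges))  # ['s', 'low', 'est']
```

"slowest" never appears in the training words, yet it still splits into learned pieces ("low", "est") plus one leftover character. This is how subword tokenization copes with rare words.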

c) Character Tokenization

Every single character becomes a token.

Example:

"Hi!" → ["H", "i", "!"]
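In Python, character tokenization is nearly a one-liner. Running it on the sentence from the first example also shows the trade-off: the set of possible tokens is tiny, but every sentence turns into many more tokens (spaces count as tokens here too):

```python
text = "Hi!"
char_tokens = list(text)  # every character becomes its own token
print(char_tokens)        # ['H', 'i', '!']

# The trade-off: very few distinct symbols, but long token sequences.
sentence = "I love JavaScript."
print(len(list(sentence)))  # 18 character tokens, versus 4 word tokens
```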

4. How Tokenization Works in AI

In modern NLP models:

Your text is split into tokens.

Each token is assigned a unique ID in the vocabulary.

These IDs are fed into the model, which looks up each one in an embedding table to get a vector of numbers (an embedding).

The model does the math-magic to understand meaning and generate responses.

Example (using a made-up vocabulary):

"I love AI" → [12, 55, 88]
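These steps can be sketched in plain Python. The vocabulary mirrors the made-up one in the example. The unknown-token ID, table size, and vector size are assumptions for illustration, and the embedding values are random stand-ins for what a real model would learn:

```python
import random

# Made-up vocabulary matching the example above (real vocabularies
# have tens of thousands of entries).
vocab = {"I": 12, "love": 55, "AI": 88}
UNK_ID = 0  # assumption: ID reserved for tokens missing from the vocabulary
id_to_token = {i: tok for tok, i in vocab.items()}

def encode(tokens: list[str]) -> list[int]:
    """Step 2: map each token to its vocabulary ID."""
    return [vocab.get(tok, UNK_ID) for tok in tokens]

def decode(ids: list[int]) -> str:
    """Reverse mapping, from IDs back to text (unknown IDs become '?')."""
    return " ".join(id_to_token.get(i, "?") for i in ids)

# Step 1: split the text (a plain whitespace split is enough here).
token_ids = encode("I love AI".split())
print(token_ids)          # [12, 55, 88]
print(decode(token_ids))  # I love AI

# Step 3: an embedding table with one vector per ID. A real model learns
# these values during training; here they are random placeholders.
VOCAB_SIZE = 100  # assumption: must be larger than the biggest ID
EMBED_DIM = 4     # assumption: real models use hundreds or thousands
rng = random.Random(0)
embedding_table = [
    [rng.uniform(-1.0, 1.0) for _ in range(EMBED_DIM)] for _ in range(VOCAB_SIZE)
]

# Looking up an embedding is just indexing the table by token ID.
vectors = [embedding_table[i] for i in token_ids]
print(len(vectors), len(vectors[0]))  # 3 4
```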

5. A Fun Analogy

Think of building a sentence like building a LEGO castle:

Tokens = LEGO bricks.

Vocabulary = all possible LEGO shapes you can use.

Model = the person building something with those bricks.

If you don’t break the set into individual bricks, you can’t build anything useful.

6. Where You’ll See Tokenization

Chatbots

Search engines

Spell checkers

Translation apps

Speech-to-text systems

Basically, any time a machine deals with human language, tokenization is happening behind the scenes.

Conclusion

Tokenization is the bridge between human words and machine understanding.

It’s not just about splitting text — it’s about structuring language so machines can process, analyze, and respond meaningfully.

Next time you type something into an AI tool, remember: before the AI “understands” you, it’s chopping your words into neat little blocks called tokens.