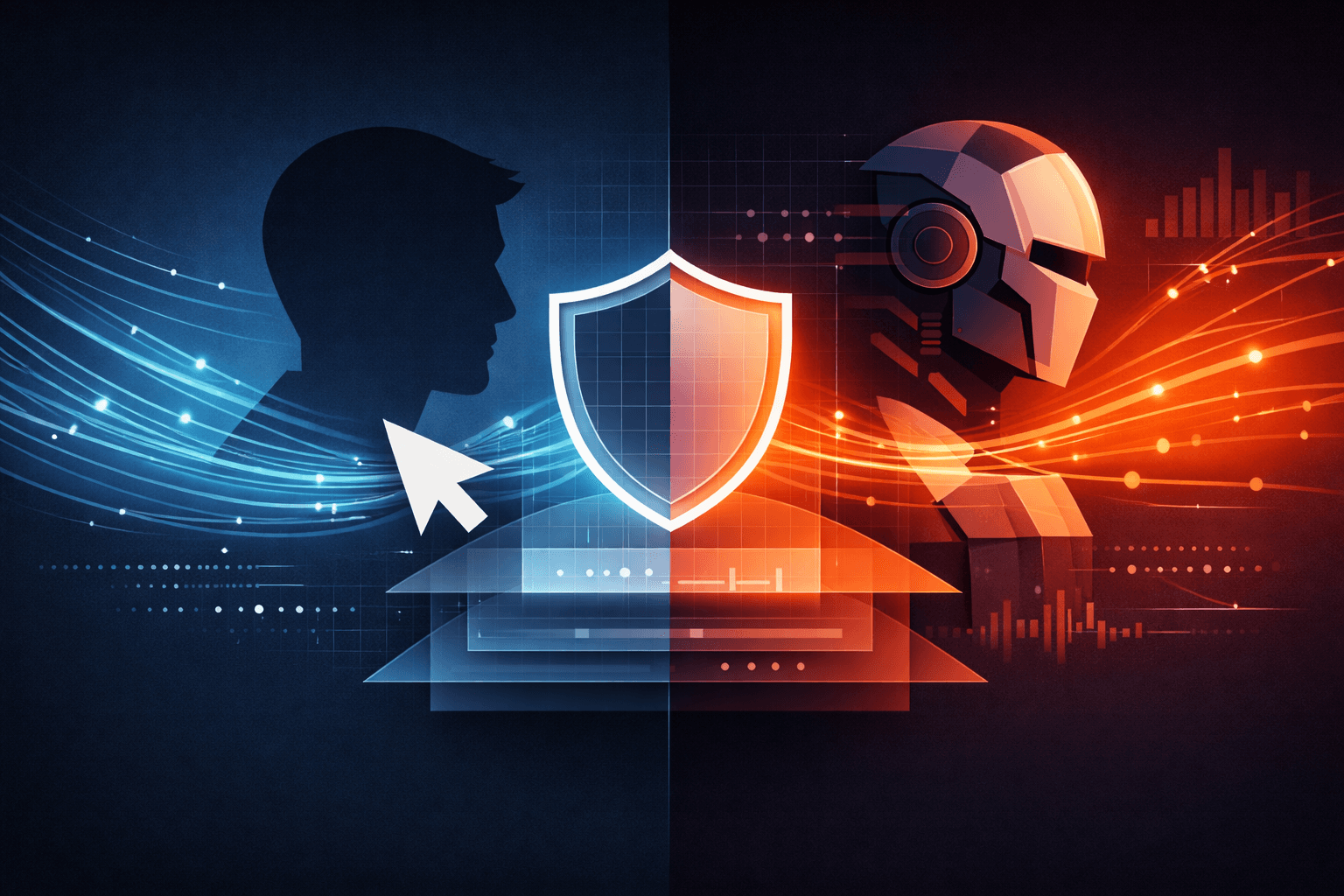

How to Spot and Block Bots on Your Website

Modern websites are constantly interacting with automated programs. Some of these bots are useful, like search engine crawlers. Others are not. They scrape data, spam forms, brute-force logins, or abuse APIs.

The challenge is not just blocking bots, but doing so without hurting real users. This article explains how to identify automated interactions, how detection works under the hood, and how to mitigate bots in a balanced, practical way.

Understanding the Problem First

Before talking about tools, it helps to understand a basic truth:

Bots behave differently from humans because they act without uncertainty.

Humans hesitate, scroll randomly, make mistakes, and act inconsistently. Bots are optimised for speed, repetition, and precision. Almost all bot-detection strategies rely on observing this difference.

Indicators of bot activity

Bot detection usually starts with recognising patterns that are statistically unlikely for humans.

Unnatural request patterns

A common red flag is request frequency. A human user rarely triggers more than a few page or API requests per second; a bot can generate hundreds.

For example:

A user browsing a product page might load the page, scroll, and click once.

A bot scraping prices might hit the same endpoint repeatedly with no delay.

Consistency is also suspicious. Humans are irregular. Bots often operate on fixed intervals like every 200 ms.
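One way to put a number on this irregularity is the coefficient of variation of the gaps between a client's requests: values near zero mean machine-like regularity. The Python sketch below assumes you already log a millisecond timestamp for each request, sorted in order. The function names and both thresholds (`max_cv`, `max_rate_per_s`) are hypothetical starting points, not tuned values.

```python
import statistics
from typing import List, Optional


def interval_regularity(timestamps_ms: List[float]) -> Optional[float]:
    """Coefficient of variation (stdev / mean) of the gaps between requests.

    Near 0 means near-perfectly regular timing. Returns None when there
    are too few requests to judge. Timestamps are assumed to be sorted.
    """
    if len(timestamps_ms) < 3:
        return None
    gaps = [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]
    mean_gap = statistics.mean(gaps)
    if mean_gap <= 0:
        return 0.0  # every request arrived at the same instant
    return statistics.pstdev(gaps) / mean_gap


def looks_scripted(timestamps_ms: List[float],
                   max_cv: float = 0.1,
                   max_rate_per_s: float = 10.0) -> bool:
    """Flag a request series that is too regular or too fast (hypothetical thresholds)."""
    cv = interval_regularity(timestamps_ms)
    if cv is None:
        return False
    duration_s = (timestamps_ms[-1] - timestamps_ms[0]) / 1000
    rate = (len(timestamps_ms) - 1) / duration_s if duration_s > 0 else float("inf")
    return cv < max_cv or rate > max_rate_per_s


# A scraper firing every 200 ms versus a person clicking around.
print(looks_scripted([0, 200, 400, 600, 800]))      # True
print(looks_scripted([0, 1200, 4500, 5100, 9800]))  # False
```

A bot can defeat this check by adding random jitter, so it works best as one weak signal combined with others rather than as a verdict.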

Strange session behaviour

Looking at the entire session instead of individual requests reveals more clues:

Sessions that last only a fraction of a second but perform meaningful actions

Sessions that never navigate between pages

Sessions that repeatedly trigger the same endpoint (login, search, API calls)

A real user explores, whereas a bot targets.
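These session clues can be expressed as a few rules over a session summary. This is a minimal sketch that assumes a hypothetical `Session` record (start and end time in seconds plus the ordered request paths) and a hypothetical `SENSITIVE_PATHS` set; the cut-offs are illustrative only.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List

# Hypothetical endpoints that count as "meaningful actions".
SENSITIVE_PATHS = {"/login", "/search", "/api/prices"}


@dataclass
class Session:
    started_at: float                               # seconds
    ended_at: float                                 # seconds
    paths: List[str] = field(default_factory=list)  # request paths, in order


def session_flags(s: Session) -> List[str]:
    flags = []
    duration = s.ended_at - s.started_at
    hits = Counter(s.paths)
    sensitive_hits = sum(hits[p] for p in SENSITIVE_PATHS)

    # Meaningful actions completed in under a second.
    if duration < 1.0 and sensitive_hits > 0:
        flags.append("too_short")
    # Plenty of requests, but only ever one distinct path.
    if len(s.paths) >= 5 and len(hits) == 1:
        flags.append("no_navigation")
    # The same sensitive endpoint hit again and again.
    if any(hits[p] >= 10 for p in SENSITIVE_PATHS):
        flags.append("repeated_endpoint")
    return flags


print(session_flags(Session(0.0, 0.4, ["/login"] * 12)))
# ['too_short', 'no_navigation', 'repeated_endpoint']
print(session_flags(Session(0.0, 95.0, ["/", "/products", "/products/42", "/search", "/products/17"])))
# []
```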

Input behaviour that feels “too perfect”

Forms are a goldmine for detection:

Humans take time to type

Humans make mistakes and use backspace

Humans don’t fill hidden fields

Bots often submit forms instantly, perfectly, and sometimes fill fields that are invisible to users. That difference alone can identify a large percentage of automated traffic.
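The three form signals translate into simple server-side checks. This sketch assumes a hidden honeypot input named `website` (a hypothetical name) that CSS hides from people, a render time recorded by the server when the form was served (a timestamp supplied by the client could simply be forged), and an optional keystroke count reported by client-side JavaScript. The names and the three-second threshold are assumptions.

```python
import time
from typing import List, Mapping, Optional

HONEYPOT_FIELD = "website"  # hypothetical hidden field; humans never see it
MIN_FILL_SECONDS = 3.0      # hypothetical: faster than this is suspicious


def form_flags(form: Mapping[str, str],
               rendered_at: float,
               submitted_at: Optional[float] = None,
               keystrokes: Optional[int] = None) -> List[str]:
    flags = []
    submitted_at = time.time() if submitted_at is None else submitted_at

    # Humans don't fill fields they cannot see.
    if form.get(HONEYPOT_FIELD, "").strip():
        flags.append("honeypot_filled")
    # Humans take time to read and type.
    if submitted_at - rendered_at < MIN_FILL_SECONDS:
        flags.append("submitted_too_fast")
    # Text arrived in visible fields without a single key event.
    typed_chars = sum(len(v) for k, v in form.items() if k != HONEYPOT_FIELD)
    if keystrokes == 0 and typed_chars > 0:
        flags.append("no_typing")
    return flags


print(form_flags({"email": "a@b.co", "website": "spam.example"},
                 rendered_at=100.0, submitted_at=100.2, keystrokes=0))
# ['honeypot_filled', 'submitted_too_fast', 'no_typing']
```

Password managers and autofill also produce text without keystrokes, so `no_typing` is better used to raise a risk score than to reject a submission outright.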

How detection actually works

From simple rules to behavioural intelligence

Rule-based detection

This is the simplest layer and often the first one implemented.

Examples:

Block more than 100 requests per minute from a single IP

Throttle repeated failed login attempts

Reject requests with malformed headers

This works well against basic bots but fails against more advanced automation using proxies or headless browsers.
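The first rule, 100 requests per minute per IP, can be enforced with a sliding-window log. This minimal in-memory sketch keeps recent timestamps per key and refuses a request once the window is full. The class name is hypothetical, and a deployment with several servers would keep this state in a shared store rather than in process memory.

```python
import time
from collections import defaultdict, deque
from typing import Deque, Dict, Optional


class SlidingWindowLimiter:
    """Allow at most `limit` requests per `window_s` seconds for each key."""

    def __init__(self, limit: int, window_s: float) -> None:
        self.limit = limit
        self.window_s = window_s
        self._hits: Dict[str, Deque[float]] = defaultdict(deque)

    def allow(self, key: str, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        hits = self._hits[key]
        # Forget requests that have slid out of the window.
        while hits and now - hits[0] >= self.window_s:
            hits.popleft()
        if len(hits) >= self.limit:
            return False  # over the limit: reject, and do not record it
        hits.append(now)
        return True


# At most 100 requests per minute from one IP.
per_ip = SlidingWindowLimiter(limit=100, window_s=60)
# In a request handler: if not per_ip.allow(client_ip), respond with HTTP 429.
```

Rejected requests are not recorded, so a client that keeps hammering is let back in as soon as its oldest accepted request ages out of the window. Keying the same class by username instead of IP, and calling it on each failed login, gives a simple throttle for the second rule.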

Behavioural analysis

This is where detection becomes more reliable.

Instead of asking “Who is this user?”, the system asks:

How long did they take before clicking?

Did they scroll naturally?

Do they move the mouse like a human?

Is there variation in timing?

These signals are difficult to fake at scale. Even advanced bots struggle to replicate human randomness consistently.
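Some of these questions can be answered from a client-side log of mouse movement. The sketch below assumes the browser reports `(x, y, t_ms)` samples and the delay in milliseconds before the first click; the function names and every threshold are hypothetical. It looks for traits of naive automation: almost no movement, a perfectly straight path, constant speed, and an instant click.

```python
import math
import statistics
from typing import List, Tuple

Sample = Tuple[float, float, float]  # (x, y, t_ms) reported by the browser


def path_straightness(samples: List[Sample]) -> float:
    """Straight-line distance divided by distance travelled; 1.0 is a perfectly straight path."""
    travelled = sum(math.dist(a[:2], b[:2]) for a, b in zip(samples, samples[1:]))
    if travelled == 0:
        return 1.0
    return math.dist(samples[0][:2], samples[-1][:2]) / travelled


def mouse_flags(samples: List[Sample], click_delay_ms: float) -> List[str]:
    if len(samples) < 3:
        return ["no_mouse_movement"]
    flags = []
    if path_straightness(samples) > 0.99:
        flags.append("perfectly_straight_path")
    # Humans speed up and slow down; naive scripts move at constant speed.
    speeds = [math.dist(a[:2], b[:2]) / (b[2] - a[2])
              for a, b in zip(samples, samples[1:]) if b[2] > a[2]]
    if len(speeds) >= 2 and statistics.mean(speeds) > 0:
        if statistics.pstdev(speeds) / statistics.mean(speeds) < 0.05:
            flags.append("constant_speed")
    if click_delay_ms < 100:
        flags.append("instant_click")
    return flags


bot = [(0, 0, 0), (10, 10, 10), (20, 20, 20), (30, 30, 30)]
print(mouse_flags(bot, click_delay_ms=20))
# ['perfectly_straight_path', 'constant_speed', 'instant_click']
```

People occasionally move in a straight line too, and sophisticated bots replay recorded human movement, so each flag should nudge a score rather than block on its own.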

Fingerprinting

Fingerprinting combines multiple browser and device attributes to create a probabilistic identity:

User agent

Screen size

Time zone

Rendering quirks (canvas, WebGL)

One attribute alone proves nothing. But when hundreds of sessions share an identical fingerprint, automation becomes the most reasonable explanation.
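A minimal sketch of the "many sessions, one fingerprint" check: hash a canonical form of the collected attributes and count how many sessions share each hash. The attribute keys and the default threshold of 100 are assumptions, and the attributes are assumed to have been gathered client-side already (for example, a hash of a canvas rendering).

```python
import hashlib
import json
from collections import Counter
from typing import Dict, Iterable, Set


def fingerprint(attrs: Dict[str, str]) -> str:
    """Stable hash of the collected attributes (key order does not matter)."""
    canonical = json.dumps(attrs, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def crowded_fingerprints(sessions: Iterable[Dict[str, str]], threshold: int = 100) -> Set[str]:
    """Fingerprints shared by at least `threshold` sessions."""
    counts = Counter(fingerprint(s) for s in sessions)
    return {fp for fp, n in counts.items() if n >= threshold}


# Hypothetical attribute keys and values.
bot = {"user_agent": "ExampleBot/1.0", "screen": "800x600",
       "timezone": "UTC", "canvas_hash": "a1b2c3"}
humans = [{"user_agent": "ExampleBrowser/2.0", "screen": f"{1280 + i}x800",
           "timezone": "Europe/Berlin", "canvas_hash": f"h{i}"} for i in range(50)]
print(crowded_fingerprints([bot] * 150 + humans) == {fingerprint(bot)})  # True
```

Large groups of identical devices, such as a company fleet with one standard configuration, can also share a fingerprint, so a crowded fingerprint is a reason to look closer, not proof.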

Third-party bot detection platforms

Services like Cloudflare or Google reCAPTCHA use machine learning models trained on massive datasets.

Instead of a binary decision, they assign a risk score indicating how likely a session is to be automated. This allows websites to react proportionally instead of blocking blindly.
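As one concrete example, reCAPTCHA v3 gives each token a score from 0.0 (very likely a bot) to 1.0 (very likely a human), which your server obtains by POSTing the token to Google's `siteverify` endpoint. The sketch below uses only the standard library. The function names are hypothetical, and it treats a failed verification or an unexpected `action` as maximum risk so the result can feed a proportional response.

```python
import json
import urllib.parse
import urllib.request
from typing import Any, Dict, Optional

VERIFY_URL = "https://www.google.com/recaptcha/api/siteverify"


def fetch_verdict(secret: str, token: str, remote_ip: Optional[str] = None) -> Dict[str, Any]:
    """POST the client's token to siteverify and return the parsed JSON verdict."""
    fields = {"secret": secret, "response": token}
    if remote_ip:
        fields["remoteip"] = remote_ip  # optional parameter
    body = urllib.parse.urlencode(fields).encode("ascii")
    with urllib.request.urlopen(VERIFY_URL, data=body, timeout=5) as resp:
        return json.load(resp)


def risk_from_verdict(verdict: Dict[str, Any], expected_action: str) -> float:
    """Turn a reCAPTCHA v3 verdict into a risk value: 0.0 = likely human, 1.0 = likely bot."""
    if not verdict.get("success") or verdict.get("action") != expected_action:
        return 1.0
    return 1.0 - float(verdict.get("score", 0.0))
```

In a login handler this might look like `risk_from_verdict(fetch_verdict(secret_key, token), expected_action="login")`, where `secret_key` is your site's server-side key and `token` is what the page's script sent.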

Prevention and mitigation

Stopping bots without punishing real users

The goal is not to eliminate all bots. That’s unrealistic. The goal is to reduce harmful automation while preserving user experience.

Layered defences

Effective protection is layered, not aggressive.

Monitor first

Log suspicious behaviour before blocking. False positives are expensive.

Slow down suspicious traffic

Rate limiting or artificial delays frustrate bots without affecting humans much.

Challenge only when needed

CAPTCHAs should appear only when behaviour crosses a risk threshold.

Block repeat offenders

IP addresses, IP ranges, or ASNs (autonomous system numbers) that repeatedly abuse the system can be denied entirely.
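These four layers can be read as an escalation ladder. The sketch below counts offences per key (an IP address, a range, or an ASN, in whatever string form you choose) and maps the count to a response. The ladder thresholds, response names, and class name are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical escalation ladder: minimum offence count -> response.
LADDER: List[Tuple[int, str]] = [
    (0, "allow"),
    (1, "log"),        # monitor first
    (3, "throttle"),   # slow down suspicious traffic
    (5, "challenge"),  # challenge only when needed
    (10, "block"),     # block repeat offenders
]


class OffenceTracker:
    """Counts offences per key and maps the count to a response."""

    def __init__(self) -> None:
        self._offences: Dict[str, int] = defaultdict(int)

    def record(self, key: str) -> None:
        self._offences[key] += 1

    def response_for(self, key: str) -> str:
        count = self._offences.get(key, 0)
        response = "allow"
        for threshold, step in LADDER:  # LADDER is sorted ascending
            if count >= threshold:
                response = step
        return response
```

In practice, offence counts should decay over time, since IP addresses get reassigned and an address that misbehaved last month may belong to a legitimate user today.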

Smart use of CAPTCHA

CAPTCHAs are useful, but overuse destroys user trust.

A good approach:

No CAPTCHA for normal browsing

CAPTCHA after multiple failed logins

CAPTCHA on high-risk actions like password resets or bulk submissions

Modern invisible CAPTCHA systems score behaviour silently and only intervene when confidence is low.
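This policy fits in a small function. The sketch assumes the caller already knows the action being performed, the recent failed-login count, and a risk score between 0 and 1 (for example, derived from an invisible CAPTCHA); the action names and thresholds are hypothetical.

```python
# Hypothetical names and thresholds for illustration.
HIGH_RISK_ACTIONS = {"password_reset", "bulk_submit"}
FAILED_LOGIN_LIMIT = 3
RISK_THRESHOLD = 0.7  # risk score in the range 0..1


def needs_captcha(action: str, failed_logins: int = 0, risk: float = 0.0) -> bool:
    """Decide whether to show a visible CAPTCHA for this request."""
    # High-risk actions are always challenged.
    if action in HIGH_RISK_ACTIONS:
        return True
    # Logins are challenged only after repeated failures.
    if action == "login" and failed_logins >= FAILED_LOGIN_LIMIT:
        return True
    # Everything else, including normal browsing, only when the silent score is high.
    return risk >= RISK_THRESHOLD
```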

Protecting APIs and sensitive endpoints

Bots love unprotected APIs.

Mitigation includes:

Authentication tokens

Strict request validation

Short-lived credentials

Rate limits per user, not just per IP

If an endpoint doesn’t need to be public, it shouldn’t be.
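Short-lived credentials can be as simple as a signed token that carries its own expiry. This sketch uses HMAC-SHA256 from Python's standard library with a hypothetical secret and a five-minute lifetime. It is not a JWT implementation, and a production system would more likely rely on an established token library and proper key management.

```python
import base64
import hashlib
import hmac
import time
from typing import Optional

SECRET = b"replace-with-a-long-random-secret"  # hypothetical; load from secure config
TTL_SECONDS = 300                               # tokens live for five minutes


def _sign(payload: str) -> str:
    return hmac.new(SECRET, payload.encode("utf-8"), hashlib.sha256).hexdigest()


def issue_token(user_id: str, now: Optional[float] = None) -> str:
    """Create a token of the form base64("user_id:expiry:signature")."""
    now = time.time() if now is None else now
    payload = f"{user_id}:{int(now) + TTL_SECONDS}"
    return base64.urlsafe_b64encode(f"{payload}:{_sign(payload)}".encode("utf-8")).decode("ascii")


def verify_token(token: str, now: Optional[float] = None) -> Optional[str]:
    """Return the user ID if the token is authentic and unexpired, else None."""
    now = time.time() if now is None else now
    try:
        decoded = base64.urlsafe_b64decode(token.encode("ascii")).decode("utf-8")
        user_id, expires, signature = decoded.rsplit(":", 2)
        expires_at = int(expires)
    except ValueError:  # bad base64, bad UTF-8, wrong shape, or non-numeric expiry
        return None
    expected = _sign(f"{user_id}:{expires}")
    if not hmac.compare_digest(signature.encode("utf-8"), expected.encode("utf-8")):
        return None
    if now >= expires_at:
        return None
    return user_id
```

The user ID that `verify_token` returns is a natural key for per-user rate limits, which keep working even when a bot rotates through many IP addresses.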

Risk-based decision making

Advanced systems assign each session a score instead of a verdict.

For example:

Low risk: allow normally

Medium risk: monitor or throttle

High risk: challenge or block

This keeps defences flexible and minimises harm to legitimate users.
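A minimal sketch of this scoring: each detector reports a value between 0 and 1, a weighted average turns them into one score, and the score picks a response. The signal names, weights, and tier boundaries are all hypothetical; the point is that one weak signal on its own stays in the low tier, while several together push a session up.

```python
from typing import Dict

# Hypothetical weights for weak signals, each reported in the range 0..1.
WEIGHTS: Dict[str, float] = {
    "timing": 0.3,       # e.g. machine-regular request intervals
    "form": 0.3,         # e.g. honeypot filled, instant submit
    "fingerprint": 0.2,  # e.g. fingerprint shared by many sessions
    "reputation": 0.2,   # e.g. IP or ASN with past offences
}


def risk_score(signals: Dict[str, float]) -> float:
    """Weighted average of the known signals; missing signals count as 0."""
    total = sum(WEIGHTS.values())
    weighted = sum(w * min(max(signals.get(name, 0.0), 0.0), 1.0)
                   for name, w in WEIGHTS.items())
    return weighted / total


def decide(score: float) -> str:
    if score < 0.3:
        return "allow"      # low risk: allow normally
    if score < 0.7:
        return "throttle"   # medium risk: monitor or throttle
    return "challenge"      # high risk: challenge or block


print(decide(risk_score({"fingerprint": 1.0})))            # allow
print(decide(risk_score({"timing": 1.0, "form": 1.0})))    # throttle
```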

Conclusion

Bot detection is not about catching robots. It’s about understanding behaviour.

Humans are inconsistent, slow, and imperfect. Bots are fast, repetitive, and precise. By observing these differences across requests, sessions and interactions, websites can reliably detect automation without relying on guesswork.

The best systems:

Combine multiple weak signals instead of trusting one

Respond proportionally instead of aggressively

Prioritise user experience as much as security

In practice, effective bot mitigation feels invisible to real users and exhausting to bots.

Want more?

Blog: https://blogs.kanishk.codes/

Twitter: https://x.com/kanishk_fr/

LinkedIn: https://linkedin.com/in/kanishk-chandna/

Instagram: https://instagram.com/kanishk__fr/