Vector Embeddings: How Computers Give Meaning to Words

Introduction

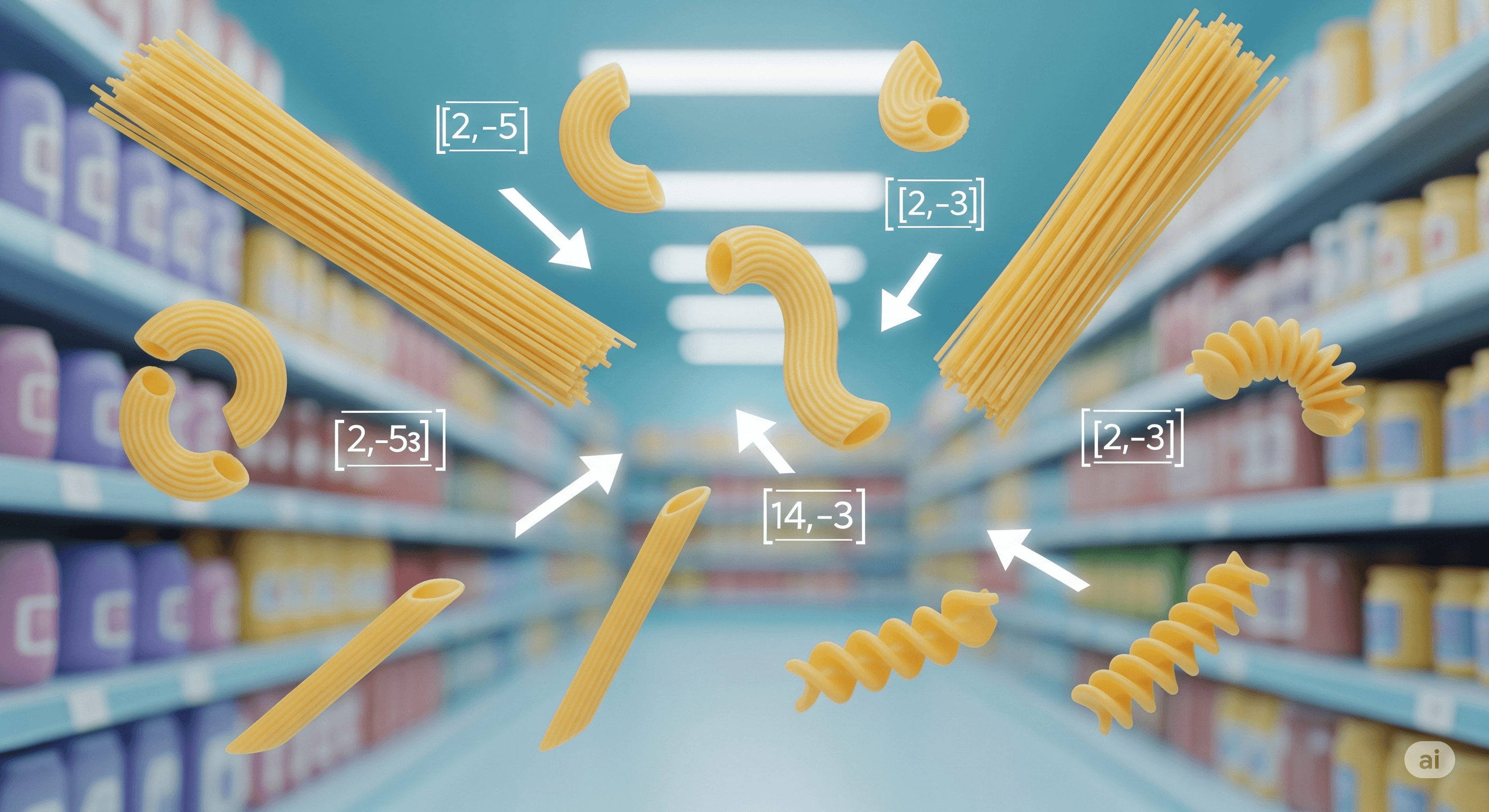

Imagine you’re at a grocery store and you ask,

"Where’s the pasta?"

Even if you didn’t say “spaghetti” or “macaroni,” the store worker gets it — they know pasta is related to those things.

Computers need a way to make similar connections between words. That’s where vector embeddings come in. They turn words (or sentences, or images) into lists of numbers that capture meaning, so a computer can tell which ones are related — even if they’re not exactly the same word.

1. What Are Vector Embeddings?

In human terms:

A vector embedding is just a list of numbers that represents an idea.

Example:

"Cat" → [0.12, -0.98, 0.33, ...]

"Dog" → [0.10, -0.95, 0.29, ...]

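These numbers are not picked by hand. A model learns them from data so that similar things end up with similar numbers. (The values shown above are made up for illustration.)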

2. Why Do We Use Embeddings?

Because computers don’t “understand” words. They work with numbers.

If we can turn “apple” into numbers, we can compare it with “banana” and see that they’re more similar than “apple” and “car.”
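To make that comparison concrete, here is a minimal sketch using cosine similarity, one common way to measure how closely two vectors point in the same direction. The three vectors are hand-picked toy values, not output from a real embedding model, and the names `toy_vectors` and `cosine_similarity` are just illustrative.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Assumption: hand-picked toy vectors, NOT real model output.
toy_vectors = {
    "apple":  [0.9, 0.8, 0.1],
    "banana": [0.8, 0.9, 0.2],
    "car":    [0.1, 0.2, 0.9],
}

apple_banana = cosine_similarity(toy_vectors["apple"], toy_vectors["banana"])
apple_car = cosine_similarity(toy_vectors["apple"], toy_vectors["car"])

print(f"apple vs banana: {apple_banana:.2f}")
print(f"apple vs car:    {apple_car:.2f}")
```

With these toy values, the apple/banana score comes out much higher than the apple/car score, which is the kind of signal a real system would rely on.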

Real-life examples:

Spotify recommending songs that sound like the one you love.

Google showing related search results.

ChatGPT understanding that “I’m feeling blue” means “I’m feeling sad,” not that you’re literally changing colors.

3. How It Works

Training on a large dataset: The AI reads massive amounts of text.

Capturing context: It learns that “king” and “queen” appear in similar contexts, so their vectors are close in “word space.”

Positioning in multi-dimensional space: Think of each word as a dot on a giant invisible map — closer dots mean similar meanings.
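The three steps above can be sketched with a deliberately simplified stand-in: instead of training a neural network, the code below just counts which words appear near each other in a tiny made-up corpus. Real embedding models learn dense vectors in a more sophisticated way, so treat this only as an illustration of the idea that words sharing contexts end up with similar vectors. The corpus, the window size, and the function names are all assumptions for the example.

```python
import math
from collections import Counter, defaultdict

# Assumption: a tiny invented corpus standing in for "massive amounts of text".
corpus = [
    "the king rules the land",
    "the queen rules the land",
    "the king wears a crown",
    "the queen wears a crown",
    "she eats a ripe apple",
    "he eats a ripe banana",
]

def context_counts(sentences, window=2):
    """For each word, count the words seen within `window` positions of it."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.split()
        for i, word in enumerate(tokens):
            lo = max(0, i - window)
            hi = min(len(tokens), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    counts[word][tokens[j]] += 1
    return counts

def cosine(c1, c2):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(c1[w] * c2[w] for w in c1.keys() & c2.keys())
    n1 = math.sqrt(sum(v * v for v in c1.values()))
    n2 = math.sqrt(sum(v * v for v in c2.values()))
    return dot / (n1 * n2) if n1 and n2 else 0.0

counts = context_counts(corpus)
king_queen = cosine(counts["king"], counts["queen"])
king_apple = cosine(counts["king"], counts["apple"])

print(f"king vs queen: {king_queen:.2f}")
print(f"king vs apple: {king_apple:.2f}")
```

In this toy corpus, "king" and "queen" appear in identical surroundings, so their count vectors match, while "king" and "apple" share almost no context.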

4. The “Word Map” Analogy

Picture a 3D map where:

All fruits cluster in one corner.

All animals in another.

Within animals, cats are closer to tigers than to cows.

Embeddings are basically coordinates on this map.
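Here is a small sketch of that map, assuming invented 3D coordinates for a handful of words. Real embeddings typically have hundreds or thousands of dimensions rather than three, and the helper name `nearest` is hypothetical. The code uses straight-line (Euclidean) distance to find each word's closest neighbor.

```python
import math

# Assumption: made-up 3D coordinates chosen so animals and fruits form clusters.
word_map = {
    "cat":    (0.90, 0.80, 0.10),
    "tiger":  (0.85, 0.90, 0.15),
    "cow":    (0.60, 0.30, 0.20),
    "apple":  (0.10, 0.20, 0.90),
    "banana": (0.15, 0.10, 0.85),
}

def nearest(word, coords):
    """Return the other word whose point is closest to `word` on the map."""
    target = coords[word]
    others = (w for w in coords if w != word)
    return min(others, key=lambda w: math.dist(target, coords[w]))

print("closest to cat:  ", nearest("cat", word_map))
print("closest to apple:", nearest("apple", word_map))
```

On this toy map, "cat" sits closer to "tiger" than to "cow", and "apple" sits next to "banana", matching the clusters described above.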

5. Cool Tricks You Can Do With Embeddings

Find Similarity: “cat” is close to “kitten.”

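Analogies: “King - Man + Woman ≈ Queen.” The arithmetic rarely lands exactly on the “queen” vector, but “queen” is typically the closest word to the result.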

Search: Find documents or images related to a given query.
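The analogy and search tricks can both be sketched as "rank everything by similarity to a target vector." The example below assumes hand-made 4D vectors whose dimensions loosely stand for royalty, masculine, feminine, and food. Real models do not have such neatly labeled dimensions, and the document titles and the `rank` helper are invented for illustration.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def rank(query, vectors, exclude=()):
    """Names in `vectors`, most similar to `query` first, skipping `exclude`."""
    candidates = [name for name in vectors if name not in exclude]
    return sorted(candidates, key=lambda n: cosine(query, vectors[n]), reverse=True)

# Assumption: toy word vectors (royalty, masculine, feminine, food).
words = {
    "king":  [0.9, 0.8, 0.1, 0.0],
    "queen": [0.9, 0.1, 0.8, 0.0],
    "man":   [0.1, 0.8, 0.1, 0.0],
    "woman": [0.1, 0.1, 0.8, 0.0],
    "apple": [0.0, 0.1, 0.1, 0.9],
}

# Analogy: compute king - man + woman, then look for the nearest remaining word.
target = [k - m + w for k, m, w in zip(words["king"], words["man"], words["woman"])]
analogy_ranking = rank(target, words, exclude=("king", "man", "woman"))
print("king - man + woman is closest to:", analogy_ranking[0])

# Search: rank toy document vectors against the vector for the query "queen".
documents = {
    "Biography of a medieval queen": [0.8, 0.1, 0.9, 0.0],
    "A history of kings":            [0.9, 0.9, 0.1, 0.0],
    "Fruit salad recipe":            [0.0, 0.1, 0.1, 0.9],
}
search_ranking = rank(words["queen"], documents)
for title in search_ranking:
    print(title)
```

Excluding the input words from the analogy candidates is a common convention, since otherwise "king" itself often scores as one of the nearest matches.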

Conclusion

Vector embeddings are like giving computers a sense of meaning.

Instead of just matching exact words, they let machines understand relationships between concepts — just like you understand that “pasta” and “spaghetti” belong in the same aisle.

So, next time you see a smart recommendation or search result, remember: there’s probably an embedding map behind the scenes making those connections.